I just waded through a lot of recent comment spam and approved the legit ones along the way. So if you posted something recently, it may now appear.

Author archive: christian

Most Popular Posts and Tags

I have added a permanent page with a summary of the most popular posts on this blog. I also tried to add meaningful tags to all posts. Here are the most important tags:

Content type tags

- download — a download is available in this post

- gem — a code snippet or other copy-paste thing is available

- math — posts that are heavy on math formulae

Topic tags

- atmospheric scattering — posts related to atmospheric effects

- gamma — posts related to display gamma

- hacks of life — little shell tricks or other things

- normal mapping — posts about normal mapping

- photometry — posts related to photometry

- physically based shading — posts related to PBS (or PBR)

So, where are the stars?

In my previous rant about dynamic exposure in Elite Dangerous (which honestly applies to any other space game made to date), I made a rough calculation to predict the brightness of stars as they should realistically appear in photos taken in outer space. My prediction was that,

- for an illumination of similar strength to that on earth,

- if the sunlit parts are properly exposed,

- and with an angular resolution of about 2 arc minutes per pixel,

then the pixel value of a prominent star should be on the order of 1 to 3 (out of 255, in 8‑bit sRGB encoding). Since then I have been curious to find some real-world validation for that prediction, and it seems I have now found it.
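To put those code values into perspective, here is a minimal sketch that decodes 8‑bit sRGB values to linear light using the standard sRGB transfer function. The function name `srgb8_to_linear` is made up for this illustration; it does not reproduce the calculation from the earlier post.

```python
def srgb8_to_linear(code):
    """Decode an 8-bit sRGB code value (0..255) to linear light in [0, 1]."""
    c = code / 255.0
    if c <= 0.04045:
        # Linear toe segment of the sRGB curve.
        return c / 12.92
    # Power-law segment of the sRGB curve.
    return ((c + 0.055) / 1.055) ** 2.4

# Compare the predicted star values (1 to 3) against full white (255).
for code in (1, 2, 3, 255):
    print(code, srgb8_to_linear(code))
```

Code values 1 to 3 fall on the linear segment of the curve, so they correspond to roughly 0.0003 to 0.0009 of the linear intensity of full white.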

\usepackage{cmbright}

The ‘modern’ looking sans-serif font I have recently been using in LaTeX equations on this blog is called ‘Computer Modern Bright’, and it is actually not so modern at all: designed by Walter Schmidt in 1996, it is to date still the only free sans-serif font available for LaTeX with full math support. Type‑1 versions of this font are available in the cm-super package, but I didn’t need to install anything, because apparently the QuickLaTeX WordPress plugin already has them. The only thing left to do was to add just one line to the preamble:

\usepackage{amsmath}

\usepackage{amsfonts}

\usepackage{amssymb}

\usepackage{cmbright} % computer modern bright

I also turned on the SVG images feature that was added with version 3.8 of QuickLaTeX, so the equations are no longer pixellated on retina displays or when zooming in! Neat, huh?

Elite Dangerous: Impressions of Deep Space Rendering

I am a backer of the upcoming Elite Dangerous game and have participated in their premium beta programme from the beginning, thoroughly enjoying what was there even at that early stage. ‘Premium beta’ sounds like an oxymoron, paying a premium for an unfinished game, but it is nothing more than purchasing the same backer status as that from the Kickstarter campaign.

I came into contact with the original Elite during Christmas in 1985. Compared with the progress I made back then in just two days, my recent performance in ED is lousy; I think my combat rating now would be ‘competent’.

But this will not be a gameplay review. Instead, I’m going to share thoughts that came to me while playing ED, mostly about graphics and shading: things like dynamic range, surface materials, phase curves, ‘real’ photometry, and so on. So … after I loaded the game and jumped through hyperspace for the first time (actually the second time), I was greeted by this screen-filling disk of hot plasma:

X‑Plane announces Physically Based Rendering

I always wondered when X‑Plane would jump on the PBR bandwagon. I like X‑Plane, I think it’s the best actively-developed* flight simulator out there, but I always felt that the shading could be better. For instance, there is this unrealistic ‘Lambert-shaded’ world terrain texture, which becomes too dark at sunset; another is the dreaded ‘constant ambient color’ that plagues the shading of objects.

Now in this post on the X‑Plane developer blog, Ben announces that Physically Based Rendering is a future development goal, yay! Then he goes on to say that, while surface shading will be a solved problem™ because of PBR, others like participating media (clouds, atmosphere) would still need magic tricks for the foreseeable future. Read more

Journey into the Zone (Plates)

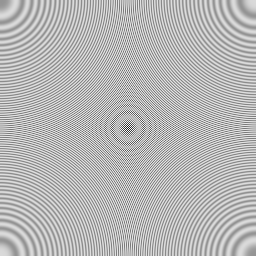

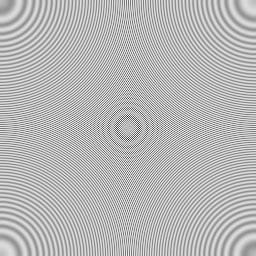

I have experimented recently with zone plates, which are the 2‑D equivalent of a chirp. Zone plates make for excellent test images to detect deficiencies in image processing algorithms or in display and camera calibration. They have interesting properties: each point on a zone plate corresponds to a unique instantaneous wave vector, and, like a Gaussian, a zone plate is its own Fourier transform. A quick image search (Google, Bing) turns up many results, but I found all of them more or less unusable, so I made my own.
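For readers who want to try this themselves, here is a minimal sketch of a zone plate generator in plain Python. It is not the code behind the images on this blog. The function names, the phase scaling `k = pi / n`, and the linear 8‑bit quantization in `write_pgm` are all assumptions for this illustration; a proper plate for display testing would also have to account for the gamma encoding of the output.

```python
import math

def zone_plate(n=256):
    """Return an n x n zone plate as rows of floats in [0, 1].

    The phase is k * r^2, so the local wave vector is 2 * k * (x, y):
    it grows linearly with the offset from the center, like a 2-D chirp.
    """
    k = math.pi / n      # assumed scaling: each component stays just below pi rad/pixel
    c = (n - 1) / 2.0    # plate center (falls between the middle pixels for even n)
    rows = []
    for j in range(n):
        y = j - c
        rows.append([0.5 + 0.5 * math.cos(k * ((i - c) ** 2 + y * y))
                     for i in range(n)])
    return rows

def write_pgm(path, rows):
    """Write the plate as a binary 8-bit PGM, quantizing the values linearly."""
    with open(path, "wb") as f:
        f.write(b"P5\n%d %d\n255\n" % (len(rows[0]), len(rows)))
        for row in rows:
            f.write(bytes(int(round(v * 255)) for v in row))

plate = zone_plate()
```

Calling `write_pgm("zoneplate.pgm", plate)` saves the result in a format that most image viewers can open; the file name is just an example.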

Zone Plates Done Right

I made the following two 256×256 zone plates, which I am releasing to the public so that anyone can use them freely.

Highlights from GDC 2014 presentations

This page is my personal collection of highlights from GDC 2014. I was not able to attend in person, so I had to rely on Twitter to stay up to date. The immersion was not perfect, but some of the thrill definitely carried over. So here it goes (in release order): Read more

Ego mecum conjungi …

… Twitter!

So, on a whim, I just embarrassed myself and tried to write in (probably wrong) Latin that I joined Twitter. You can follow me at @aries_code.

If you wonder how this came about, this was my train of thought:

- Twitter has something to do with birds

- Birds have fancy Latin species names

- The species name for the sparrow is Passer domesticus

- This doesn’t sound too fancy …

- How do you say ‘I joined Twitter’ in Latin anyway?

But then I discovered that I was onto something: According to one argument, the brand name of Twitter should have been ‘Titiatio’, had it existed in antiquity. And according to another argument, Latin should be an ideal Twitter language, because it is both short and expressive.

But I digress. If you are into computer graphics, then you know of Johann Heinrich Lambert, the eponym of our beloved Lambertian reflectance law. The book where he established this law, Photometria, is written entirely in Latin. Now this is hardcore!

So, now you know what to do if you want to stand out in your next SIGGRAPH paper …

Yes, sRGB is like µ‑law encoding

I vaguely remember someone making a comment in a discussion about sRGB, that ran along the lines of

So then, is sRGB like µ‑law encoding?

This comment was not about the color space itself but about the specific pixel formats nowadays branded as ‘sRGB’. In this case, the answer should be yes. And while the technical details are not exactly the same, that analogy with the µ‑law very much nails it.
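To make the analogy concrete, here is a minimal sketch that evaluates both encoding curves side by side for a few linear input values. The function names are made up for this illustration. It assumes the standard sRGB transfer function and the µ‑law companding formula with µ = 255, applied to non-negative inputs only (audio µ‑law additionally handles the sign).

```python
import math

MU = 255.0  # companding parameter of 8-bit mu-law (an assumption of this sketch)

def mu_law_encode(x):
    """Compress a linear value x in [0, 1] with the mu-law curve."""
    return math.log1p(MU * x) / math.log1p(MU)

def srgb_encode(x):
    """Encode a linear value x in [0, 1] with the sRGB transfer function."""
    if x <= 0.0031308:
        return 12.92 * x                        # linear toe segment
    return 1.055 * x ** (1.0 / 2.4) - 0.055     # power-law segment

# Both curves lift small inputs, spending more code values on dark/quiet signals.
for x in (0.0, 0.001, 0.01, 0.1, 0.5, 1.0):
    print(f"{x:6.3f}  sRGB={srgb_encode(x):.3f}  mu-law={mu_law_encode(x):.3f}")
```

The two curves differ in shape (a power law with a linear toe versus a logarithm), but both map [0, 1] onto [0, 1] monotonically and lie above the identity in between, which is what a companding code is about.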

When you think of sRGB pixel formats as nothing but a special encoding, it becomes clear that using such a format does not automatically make you “very picky about color reproduction”. This assumption was used by hardware vendors to rationalize the decision to limit the support of sRGB pixel formats to 8‑bit precision, because people “would never want” to have sRGB support for anything less. Not true! I’m going to make a case for this later. But first things first.
