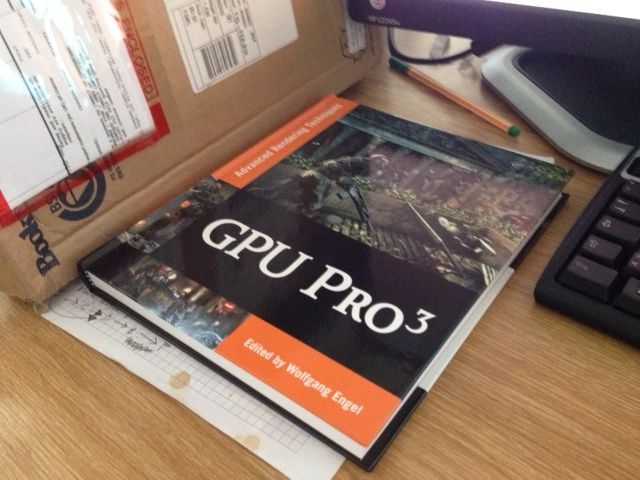

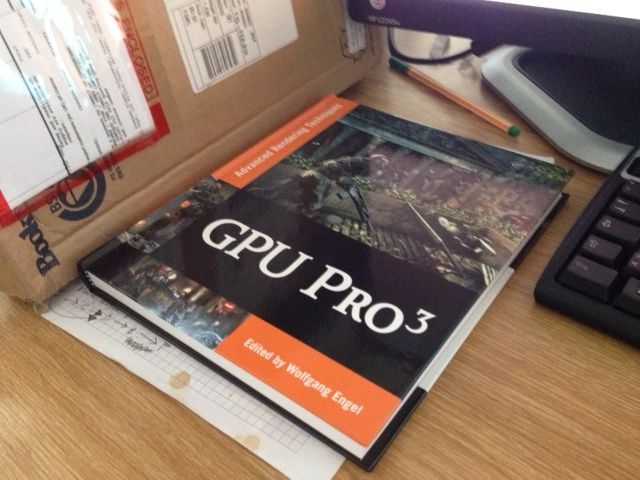

I found my copy of the book in the mail today. I was a little surprised by its moderate size; other volumes of this series were just that: volumes! I think this one is about half the size of the previous tomes. By the way, this post is a shameless plug, because the book contains an article written by me. Thanks go to Wolfgang Engel, the series editor, Christopher Oat, my section editor, and CRC Press for making it possible! I will post some comments on the other articles once I have read them through.

Edit:

I found one very good and comprehensive article on data-driven engine design by Donald Revie. The ideas presented there resonate very well with designs that have worked well for me in the past, so this part gets a solid +1 from me.

(I ended up with a triad of IRenderObject, IRenderGeometry and IRenderProgram, where the render object would AddBatches() to a draw list. Each batch refers to one geometry, which contains the mesh, and one program, which sets the render state. For instance, a SpeedTree™ render object would typically add three batches, one each for the branches, fronds and leaves, where each batch pairs the specific geometry with an appropriate program. The draw list is then sorted in one go via a general 128-bit sort key, and the batches are rendered in order. This system is general enough that the UI system can also just AddBatches() to this list (so the UI system, as a whole, is just one instance of a render object). In this case, the individual UI geometry objects just represent views into one big dynamic vertex buffer. I probably want to explain this system in detail in another post.)
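To make the description above a bit more concrete, here is a minimal C++ sketch of how such a triad could fit together. Only the names IRenderObject, IRenderGeometry, IRenderProgram and AddBatches() come from the description; everything else (the `SortKey` layout as two 64-bit halves, `Batch`, `DrawList`, `RenderFrame`, and the logging stand-ins for real geometry and programs) is assumed for illustration and is not the actual engine code.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <string>
#include <tuple>
#include <utility>
#include <vector>

// 128-bit sort key modeled as two 64-bit halves. The bit layout is an assumption.
struct SortKey {
    std::uint64_t hi;  // hypothetical: layer / pass / program id
    std::uint64_t lo;  // hypothetical: geometry id / depth
    friend bool operator<(const SortKey& a, const SortKey& b) {
        return std::tie(a.hi, a.lo) < std::tie(b.hi, b.lo);
    }
};

struct IRenderGeometry {
    virtual ~IRenderGeometry() = default;
    virtual void Draw(std::vector<std::string>& log) const = 0;   // issues the mesh
};

struct IRenderProgram {
    virtual ~IRenderProgram() = default;
    virtual void Apply(std::vector<std::string>& log) const = 0;  // sets render state
};

// One batch pairs one geometry with one program.
struct Batch {
    SortKey key;
    const IRenderGeometry* geometry;
    const IRenderProgram* program;
};
using DrawList = std::vector<Batch>;

struct IRenderObject {
    virtual ~IRenderObject() = default;
    virtual void AddBatches(DrawList& list) const = 0;
};

// Stand-ins that only record what a real backend would do.
struct NamedGeometry : IRenderGeometry {
    std::string name;
    explicit NamedGeometry(std::string n) : name(std::move(n)) {}
    void Draw(std::vector<std::string>& log) const override { log.push_back("draw " + name); }
};
struct NamedProgram : IRenderProgram {
    std::string name;
    explicit NamedProgram(std::string n) : name(std::move(n)) {}
    void Apply(std::vector<std::string>& log) const override { log.push_back("use " + name); }
};

// A tree-like object contributing three batches (deliberately added out of order).
struct TreeObject : IRenderObject {
    NamedGeometry branches{"branches"}, fronds{"fronds"}, leaves{"leaves"};
    NamedProgram opaque{"opaque"}, alphaTest{"alpha-test"};
    void AddBatches(DrawList& list) const override {
        list.push_back(Batch{SortKey{0, 2}, &fronds, &alphaTest});
        list.push_back(Batch{SortKey{0, 1}, &branches, &opaque});
        list.push_back(Batch{SortKey{0, 3}, &leaves, &alphaTest});
    }
};

// The whole UI as a single render object; its key places it after the scene.
struct UiObject : IRenderObject {
    NamedGeometry quads{"ui-quads"};
    NamedProgram program{"ui"};
    void AddBatches(DrawList& list) const override {
        list.push_back(Batch{SortKey{1, 0}, &quads, &program});
    }
};

// Collect batches from all objects, sort once by key, then render in order.
std::vector<std::string> RenderFrame(const std::vector<const IRenderObject*>& objects) {
    DrawList list;
    for (const IRenderObject* object : objects) object->AddBatches(list);
    std::stable_sort(list.begin(), list.end(),
                     [](const Batch& a, const Batch& b) { return a.key < b.key; });
    std::vector<std::string> log;
    for (const Batch& batch : list) {
        assert(batch.geometry && batch.program);
        batch.program->Apply(log);
        batch.geometry->Draw(log);
    }
    return log;
}
```

In this sketch the order in which objects add their batches does not matter, since the single sort over the key decides the final draw order. A real key would presumably pack things like pass, program and depth into the 128 bits so that state changes are minimized, but that packing is not specified here.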

Two other very inspiring articles so far are the one about geometric postprocess antialiasing, by Emil “Humus” Persson, and the piece about global illumination using a voxel grid, from the teams at the universities of Koblenz and Magdeburg. I had already read their ACM paper, and I think it is a viable method.